Claude Hamman – not to be confused with Anthropic’s Claude.ai – is both a risk expert and a futurist. A handy combination in a world where foresight is invaluable for shaping long-term organisational strategy and keeping a beady eye on the evolving risk universe.

As the head of risk advisory at The Simah Group, Hamman – or Claude 1.0, as he puts it – writes and speaks widely about the interplay between foresight, risk, and resilience, working closely with business leaders to help them “master the future with confidence” by simplifying uncertainty and tracking emerging trends.

“Businesses are so focused on immediate risk, on compliance risk, they tend to not have the foresight to look five, 10, or 50 years into the future,” he explains. Managing immediate risk and looking far ahead are both crucial at a time when digital disruption continues to shake up the risk environment.

The big disruptor: AI

Since the launch of ChatGPT in November 2022, and the explosion of interest in artificial intelligence (AI), even the uninitiated and technologically challenged have begun talking about datasets, deepfakes, large language models, generative AI (gen AI), agentic AI, and algorithmic bias. So much so that by early 2025 McKinsey & Company reported that three-quarters of its 1 491 global survey respondents noted that at least one business function was already using AI. Not surprisingly, across the 101 countries represented in the survey, the bigger and richer organisations were ahead of smaller businesses in terms of adoption and reskilling, although the role of senior leaders in driving gen AI adoption was almost level pegging (37% for larger firms and 34% for smaller organisations).

The McKinsey findings also showed an increased focus on mitigating AI-related risks, including inaccuracy, cybersecurity vulnerabilities, intellectual property infringement, compliance and privacy missteps, and the impact of AI use on employees.

[insert figure]

Source: McKinsey Global Surveys on the state of AI, 2023-2024

These insights tallied with the results of a recent survey of C-suite executives by professional services firm AON, which highlighted the risk of data leakage from cloud-based AI services, data governance issues arising from third-party service providers, and legal risks associated with AI components, including bias, discrimination, and copyright infringement.

Despite these challenges, the AON report noted that “nearly 100% of leaders say generative AI has influenced how their businesses acquire and retain customers” although “fewer than half say their generative AI investments are outperforming other investments”.

[insert graph]

Source: AON

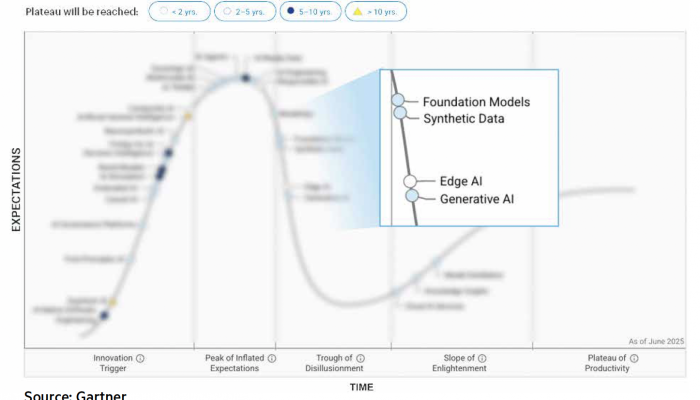

As AI shifts from being an exciting new technology into the next stage of its life cycle, as tracked by the Gartner Hype Cycle, more of these limitations and threats will come to the fore, exposing weaknesses in governance structures and the far-reaching impact of AI adoption on jobs and the composition of national economies.

[insert graphic]

Source: Gartner

How AI is reshaping risk management

Whether organisations and risk managers are advancing a human-AI hybrid approach, keeping solutions in-house, or looking for external suppliers, there is already a wide range of AI solutions available to support day-to-day functions and services across industries as varied as law, insurance, professional services, air travel, and retail. Risk management is no different, explains Hamman, noting AI’s use in improving risk assessments, underwriting, fraud detection, and the claims process for medical aids or insurance companies. Thomson Reuters reports additional uses for the risk industry, including enhanced risk flagging, faster decision-making, data collection and analysis, and training.

Pointing to the insurance industry as an example, Hamman says AI is already being used to quantify loss ratios, rather than relying on human actuaries. “AI can do this faster and give you the outliers that you didn’t really think of,” he says.

Does this mean that actuaries are on the way out? Certainly not, says Hamman, but as an additional tool in the quantification process, AI will “give insurance companies – and actuaries themselves – a much better read on, for instance, issues like motor losses”.
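For readers who want to see the arithmetic behind that idea, the sketch below computes a loss ratio (incurred losses divided by earned premium) for a handful of motor segments and flags any segment that sits far from the rest. It is a minimal illustration, not a description of any insurer's model: the segment names, the figures, the `loss_ratio` and `flag_outliers` helpers, and the 3.5 cut-off are all assumptions, and a simple median-based statistical test stands in for the AI tools Hamman describes.

```python
from statistics import median


def loss_ratio(incurred_losses: float, earned_premium: float) -> float:
    """Loss ratio = incurred losses / earned premium."""
    if earned_premium <= 0:
        raise ValueError("earned premium must be positive")
    return incurred_losses / earned_premium


def flag_outliers(ratios: dict[str, float], cutoff: float = 3.5) -> list[str]:
    """Flag segments whose loss ratio is far from the median.

    Uses a modified z-score based on the median absolute deviation (MAD).
    The 3.5 cut-off is a common rule of thumb and an assumption here.
    """
    values = list(ratios.values())
    centre = median(values)
    mad = median(abs(value - centre) for value in values)
    if mad == 0:
        return []  # all ratios (nearly) identical: nothing stands out
    return [
        name
        for name, value in ratios.items()
        if 0.6745 * abs(value - centre) / mad > cutoff
    ]


# Hypothetical motor book: (incurred losses, earned premium) per segment.
portfolio = {
    "hatchback": (620_000, 1_000_000),
    "sedan": (650_000, 1_000_000),
    "suv": (600_000, 1_000_000),
    "bakkie": (680_000, 1_000_000),
    "sports": (1_400_000, 1_000_000),
}

ratios = {name: loss_ratio(*figures) for name, figures in portfolio.items()}
flagged = flag_outliers(ratios)
print(flagged)
```

With these made-up figures only the sports segment is flagged. What to do about it remains a judgement call for the actuary, which is the point Hamman makes about AI being an additional tool.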

Evolve with the times

While these unique and varied uses are being brewed up for public consumption, they are also opening the door to risks that didn’t exist previously and which certainly don’t play by the same rules. As Wasim Malik, CEO of Australia-based Risk Professionals, wrote in a recent article for The Business Continuity Institute, “Traditional business continuity frameworks such as ISO 22301 are designed for predictable failures. A system goes down; you restore it. It is binary. It is visible. You know when the failure has happened. AI does not fail like that. It fails while still functioning. It can drift from its intended purpose. It can generate biased decisions without triggering a single operational alarm. It can be accurate from a performance perspective and still be reputationally or legally catastrophic.”

This realisation makes guarding against AI risks far more complex and multi-dimensional than before, and requires insurance cover to adapt accordingly.

Hamman believes coverage for AI systems, algorithms, and processes might follow a similar evolution to drone technology insurance, which burst onto the scene more than a decade ago. “Today, you can insure your hobby drone as part of your personal lines policy. It’s like a phone, it’s just one more asset,” explains Hamman, who also discussed these trends during a recent Beyond the Scan podcast. However, if the drone is used for commercial purposes, then the procedure for obtaining cover is more complex and the regulations are stricter.

Cyber insurance offers another parallel for how AI cover could develop, says Hamman. “Companies can secure cover for cyber insurance if they have really great controls, understand the environment and have security in place – there are various layers of protection that insurers ask for before they’ll give you that cover,” he explains.

As the life cycle of AI matures, it’s these requirements that will force companies to more carefully consider the implications of rolling out AI solutions, rather than “taking that jump without necessarily understanding what it means”, says Hamman.

AON’s Jesus Gonzalez, deputy global practice leader for intangible assets, agrees, warning risk managers to take the risks associated with AI seriously and not give in to the temptation to push blindly forward to avoid being left behind.

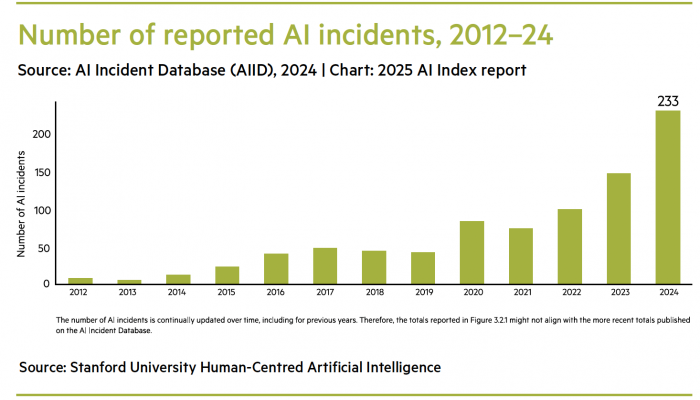

According to Gonzalez, only 64% of organisations using AI are actively looking to address issues of AI hallucinations or inaccuracies, despite the fact that the number of AI-related “problems” rose to 233 in 2024, up 56.4% from 2023, based on Stanford University’s 2025 AI Index Report. According to Stanford, “among the incidents reported were deepfake intimate images and chatbots allegedly implicated in a teenager’s suicide”.

[insert bar graph]

Source: Stanford Institute for Human-Centered Artificial Intelligence

Considering the rise in potential risks, as companies step up AI deployment they should be asking questions such as these suggested by AON:

- What controls or governance frameworks do we have in place?

- Is there a human interface or oversight?

- Has the system been peer reviewed?

- Have vendors been properly vetted?

- Where is the information coming from and can we check sources?

- Do we have systems in place to track potential errors and to prevent unintended bias?

Finally, companies should continuously look to the future and ask: are our AI systems ready for the next leap forward? Right now, that looks to be agentic AI, where networks of AI agents operate and execute tasks without human intervention. This latest evolution is already unfolding and offering more avenues to transform risk management while, potentially, cutting humans further out of the loop.

There’s risk in going down both roads – and opportunities too – provided you have the foresight to know the difference.

The case of Virgin Money and the moralistic AI

When British fintech writer David Birch contacted Virgin Money in January 2025 to enquire about merging his existing Virgin Money accounts, the AI-powered chatbot responded: “Please don’t use words like that. I won’t be able to continue with our chat if you use this language.” When Birch posted this exchange on LinkedIn, others piled in, with comments like “it also doesn’t like the word ‘duck’” and “It must definitely be the word ‘merge’ – Branson hates the word!”

The chatbot was rapidly retired and apologies were offered, but the matter was subsequently interrogated by the Financial Times, which noted that banks were increasingly experimenting with gen AI in spite of the concerns about “so-called hallucinations, which occur when chatbots spew out incorrect information”. Similarly, the newspaper noted that Air Canada also came a cropper after its AI chatbot misled a passenger about the airline’s policy.

South African risk expert Claude Hamman singles out UK parcel delivery company DPD, whose AI chatbot swore at a customer and criticised the company following a software update. He also highlights the recent case of Deloitte agreeing to partially refund the Australian government for an A$440 000 (about US$290 000) report found to contain various errors attributed to AI hallucinations, such as fictitious sources and references. The debacle called the professional services firm’s reputation into question, with one Australian senator accusing Deloitte of having a “human intelligence problem”, reported The Guardian newspaper.


Tightening the guardrails

Gartner, the research and advisory firm behind the Gartner Hype Cycle, believes that by 2027:

- All countries will have some sort of AI regulation in place

- Without proper governance most AI projects will “fail to meet expectations”

- Some 75% of AI platforms will have embedded practices for responsible AI use

In South Africa, any talk of governance means looking to the King Codes and the role of responsible leadership in an AI-powered world.

On 31 October 2025 the King V Corporate Governance Framework (King V) was published, building on the previous versions and expanding in step with the times to include AI governance. These new guidelines fit with South Africa’s 2024 National Artificial Intelligence Policy Framework, while reinforcing the role of responsible and ethical leadership, and expanding requirements for cybersecurity and data protection.

The robustness of these governance requirements will, says risk expert Claude Hamman, make the role of chief information security officer even more critical in the future. “AI is both a tool for criminal intent and a tool for good, and the security guys sit in the middle, constantly trying to see how to best stay ahead,” he explains. As cybercriminals get smarter and more adept in their use of AI, these skills – together with effective governance and guardrails – will be all-important.

Using AI to spot fraud

South Africa’s Discovery Bank announced at the end of 2025 that it would be using artificial intelligence (AI) to enhance fraud prediction and alerts. While consumers would still have the final say when it came to giving payments the green light, the bank’s Trust Alert system would be used to assess in-app transactions and 3D Secure payments in real time. Explaining how the technology worked, News24 quoted Discovery Bank CEO Hylton Kallner as saying that the system boasted a 90% success rate in predicting possible fraud by cross-checking multiple risk factors such as unique behavioural patterns, location details, and timing.
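To make the idea of cross-checking several risk factors concrete, here is a deliberately simple sketch. It is not how Trust Alert works, as the article does not describe its internals. The `CustomerProfile` and `Payment` fields, the point weights, and the alert threshold are all hypothetical, and fixed rules stand in for what would in practice be a trained model. In keeping with the approach described above, the sketch only raises an alert and leaves the final decision to the customer.

```python
from dataclasses import dataclass


@dataclass
class CustomerProfile:
    """Hypothetical summary of a customer's usual behaviour."""
    typical_max_amount: float  # largest amount the customer normally pays
    home_country: str          # country where payments usually originate
    active_hours: range        # hours of the day the customer is usually active


@dataclass
class Payment:
    amount: float
    country: str
    hour: int  # local hour of the day, 0-23


def risk_points(payment: Payment, profile: CustomerProfile) -> int:
    """Add up points for each factor that departs from the usual pattern.

    The weights (40, 35, 25) are illustrative assumptions only.
    """
    points = 0
    if payment.amount > profile.typical_max_amount:  # behavioural pattern
        points += 40
    if payment.country != profile.home_country:      # location
        points += 35
    if payment.hour not in profile.active_hours:     # timing
        points += 25
    return points


def should_alert(payment: Payment, profile: CustomerProfile, threshold: int = 50) -> bool:
    """Raise an alert for the customer to review; never block outright."""
    return risk_points(payment, profile) >= threshold


profile = CustomerProfile(
    typical_max_amount=5_000.0, home_country="ZA", active_hours=range(7, 22)
)
routine = Payment(amount=350.0, country="ZA", hour=13)
unusual = Payment(amount=18_000.0, country="RO", hour=3)

print(should_alert(routine, profile))
print(should_alert(unusual, profile))
```

Whole-number points are used instead of fractional weights so that the comparison against the threshold is exact.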

KEY TAKEAWAYS:

- Artificial intelligence (AI) is helping companies with data processing and analysis, essential insights, spotting cases of fraud, and even personalising insurance cover.

- AI tools also come with risks such as fake information and “hallucinating” chatbots.

- Merging risk management principles with foresight helps companies identify and mitigate AI risks, without missing out on the potential upside.