As an avid but suspicious user of generative AI (genAI) tools, I found myself nodding as I read an article in The Guardian titled “Are We Living in a Golden Age of Stupidity?” It explores how modern technologies, from smartphones to genAI, may be thinning our attention, memory, and capacity for deep thought. It also describes an experiment in which people writing with genAI displayed far lower cognitive engagement and recall than those writing unaided.

When Rhodes doctoral student (and GIBS MBA alum) Jeri-Lee Mowers then asked me for an interview about my own genAI use, I expected a conversation about tools, but she wanted to talk about what they’re doing to our minds. Her research explores how genAI is reshaping the ways people reason, judge, and make sense of the world.

Cognitive offloading – the strongest trend

Mowers has identified five distinct modes of genAI use and a widening gap between people’s capabilities and their awareness of how these tools shape their thinking. Her research draws on 80 interviews with 67 participants from civil society, academia, and business, ranging from hesitant genAI newcomers to highly skilled early adopters. The strongest pattern in her data, she says, is cognitive offloading (outsourcing parts of our thinking to a tool or device), but with wildly uneven awareness of what people are actually handing over to genAI.

According to Mowers, interviewing “super-users” (aka silver-collar workers or bionic professionals) has been especially revealing. They’re achieving exceptional results because they treat genAI not as a shortcut, but as a cognitive partner.

Developing modal intelligence

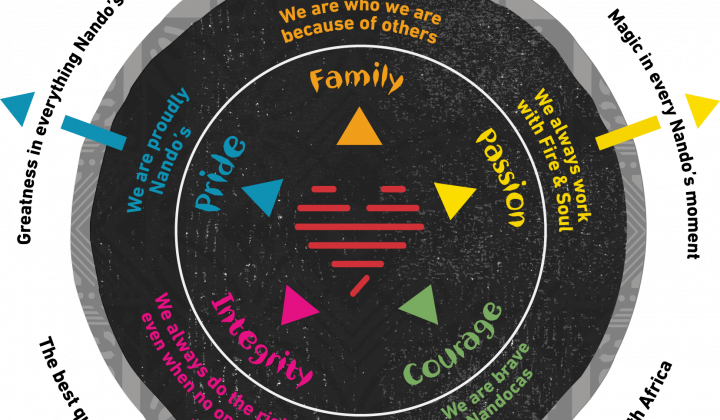

Super-users’ behaviour led Mowers to identify five modes of genAI use and a meta-skill she calls modal intelligence: the ability to shift deliberately between these modes depending on the task. Most users, she notes, remain stuck in a default mode, using genAI reactively.

“In my data, the vast majority of people use genAI as a faster search engine,” she says. The remaining, more advanced users apply it strategically, switching between modes as needed.

Five modes for genAI mastery

Across sectors, Mowers has identified five cognitive modes of genAI use, which super-users toggle between as needed:

- The soul of discipline: using genAI to build ritual and consistency.

- The antidote to time: engaging with genAI with deep presence – entering a flow state, where time seems to stop.

- The archer’s aim: leveraging genAI with precise intention. Often, users rely on their own domain knowledge to prompt most effectively in pursuit of a very specific output.

- The weaver’s loom: using genAI for reflection and integration.

- The river’s flow: engaging genAI in a spontaneous and playful way, which is a potential pathway to innovation.

Cognitive clarity precedes prompting performance

“One of the most counterintuitive findings of my research so far is that although we’re constantly being told that genAI success is all about prompting, that’s actually backwards. Effective genAI partnership starts with metacognitive clarity – knowing how you think, having an idea of where your cognitive gaps are, and knowing what kind of thinking a situation requires before you even open the chat window.”

She suggests users ask themselves: What mode am I in? What am I offloading? What thinking must stay fully human?

The problem, she believes, is that many users are unaware that cognitive offloading is taking place, let alone that they need to protect their own thinking.

“To me, this exposes the brutal gap between how our education system has taught us what to think versus being taught how to think or to observe our own thinking,” she says.

GenAI as a mirror, not a crutch

The issue is tied to context. “In South Africa, we are navigating GenAI adoption against the backdrop of extreme inequality and a legacy of educational systems that often prioritise compliance over critical thinking – a pattern that echoes in many unequal societies, but is especially stark here,” Mowers says. “GenAI arrives promising to think for you... Many people don’t have the metacognitive infrastructure to know what to keep and what to delegate. The professionals in my study who excel alongside this technology all share one trait: they treat genAI as a mirror and not a crutch. They use it to expose and to strengthen their thinking, not replace it.”

Dr Roze Phillips, adjunct faculty member and African futurist at GIBS, says the biggest influence of genAI is ontological rather than technological. “It’s changing how people come to know things, not just what they know. I’m seeing my students turn to LLMs as their first port of call, not their last. Socrates, whose teaching gave rise to the Socratic method, believed that wisdom lives in the space between the question and the answer. That is where interrogation, interpretation, debate, provocation, judgement, and reflection happen. GenAI collapses that space. When answers arrive instantly, they can look like understanding without being understanding. It creates a kind of knowing that is fast, polished, and confident, but ultimately shallow,” she says.

She says this points to a deeper shift: seeing knowledge as something instant and individual that we consume rather than as something lived and contextual that we create, share, and from which we grow. “In that shift, I can’t help feeling that we’re losing something essential about what it means to be human.”

Why GenAI is different

“GenAI lets us offload things like judgement, sensemaking, and even moral pause – the societal provocations and frictions that help us develop and grow,” says Phillips.

In futures studies, she says, there’s a concept called Disowned Futures: the futures we say we want but quietly sacrifice because the dominant system rewards something else.

“In this case, we say we want leaders with judgement, imagination, and ethical courage. But our genAI tools make those very qualities easy to skip, giving us the appearance and illusion of thinking without the actual cognitive grappling that gives an answer its weight. And when that friction disappears, so does the moment when you usually ask yourself: ‘What is the consequence of this decision?’”

The danger, she believes, isn’t that machines start thinking for us, but that we stop doing the thinking that makes us wiser, more grounded, and more responsible. “The ethical question isn’t only about bias, fairness, explainability, or accuracy. It’s about protecting the human cognitive and moral processes that shape mature, courageous, and accountable leaders.”

Sectoral differences in genAI use

Mowers’ data shows stark sectoral differences in how people are using genAI. “Business professionals are the fastest adopters,” she says, driven by the lure of genAI as a productivity multiplier.

But speed comes with risks: “instrumental overuse” and the resulting “AI slop” – “the uninterrogated outputs people send straight into the system of work” – which affect quality downstream. One person’s shortcut, she notes, often becomes someone else’s labour.

At the same time, she’s observing a troubling emotional pattern: “People are extremely productive, generating more output than ever before, but losing the thinking and the feeling that made their work meaningful.”

Civil society participants are using genAI more cautiously. “Their resistance does not appear to be technophobia. It’s ethical vigilance,” Mowers says. The risk is falling behind; the opportunity is becoming the conscience of genAI adoption.

Academics are the most conflicted group. Enthusiasm declines the further one moves from ICT disciplines, and anxiety about “intellectual displacement” runs deep. Some fear that if genAI can summarise, synthesise, and even write, “what then becomes the role of a scholar?”

Yet there are also pockets of extraordinary innovation, Mowers says, with researchers using genAI to scale tasks (such as library management) in ways previously thought impossible.

Academic genAI approaches

Mowers’ interviews suggest the divide between universities embracing genAI and those gatekeeping it has little to do with resources and everything to do with leadership courage and institutional culture. She points to three local institutions leading the way: North-West University, which made a strategic decision early on “to learn in public”, and UCT and Rhodes University, which switched off their generative-AI detectors after acknowledging the detectors’ negative effects on the learning environment.

What sets these universities apart, she says, is framing genAI as pedagogy rather than productivity. “They’re not asking, ‘How can we use GenAI?’ They’re asking, ‘What kind of thinking do we want our students to develop, and how can GenAI support that?’”

This echoes her broader findings on the need for metacognitive clarity: the quality of the questions we ask determines the quality of the partnership we build with the tool.

She also highlights institutions that are creating sandbox environments where faculty can “try, fail, and learn without career risk” – a principle she sees as essential to academic life. By contrast, tools such as Turnitin’s genAI detector, she argues, have created a “presumed guilty” environment that undermines the very purpose of a university.

The third distinguishing feature is early, explicit engagement with ethics. Leading institutions address questions of integrity, bias, and equity from day one, rather than deferring them. Meanwhile, many universities remain stuck in “policy paralysis”, waiting for perfect guidelines while students are already using genAI “without institutional guidance – perhaps the worst of both worlds.”

Phillips says the task in business schools isn’t to ban genAI; it’s to teach with it. “In my classrooms, genAI is a sparring partner. Students should critique it, challenge it, ask what’s missing and examine the assumptions beneath the output. Even a simple question like ‘What alternative futures could grow from this?’ shifts them from prediction to possibility.”

This, she says, leads to the question that should occupy us: who do we become in the future we are shaping?

“The leaders Africa needs will be rooted in context, resilient to friction, rich in imagination, grounded in judgement, and courageous in action. We need people who use technology to amplify their humanity, not those who allow its shortcuts to shape who they become. When convenience replaces consciousness, we lose the human capacities that make civilisation possible. We lose knowledge, judgement, memory, meaning, imagination, and ultimately wisdom.”

Skills to succeed in working with genAI

Mowers has identified three non-negotiable adaptive capabilities for working well with genAI: metacognitive awareness, modal agility, and ethical discernment. Together, they form what she calls modal intelligence: the internal operating system for any productive partnership with the technology.

For business leaders, this demands a shift in what they optimise for. “If you’re still chasing efficiency at all odds, you are literally training people to be redundant.” In contrast, teams built on modal intelligence create “a not easily replicated, durable competitive advantage”.

Her message is clear: the future belongs not to those who use genAI fastest, but to those who know what not to offload, and why.