Conversations about AI tend to focus on conspicuous harms: those where a clear line can be drawn from cause to effect because the harms are direct consequences of AI systems. These include algorithmic bias, security and privacy concerns, fears of AI replacing jobs, and uncertainty about AI’s potential value and benefits. Leaders, particularly in business, tend to focus on this class of problems. Encouragingly, there are signs that regulations and ethical codes will address these concerns, at least to some extent.

Here, I share three lessons for the future that go beyond conspicuous harms caused by the development and deployment of AI systems.

The first is that everything is connected.

The second is that complex problems require that we think and act slowly.

The third is that it is within our power to create our desired future.

These lessons are informed by my work as a systems-change expert tackling pressing, intractable social challenges, and by my academic expertise in AI.

There are certain suppositions worth naming before going into the lessons:

- The relevance of AI is undeniable. It is here to stay.

- AI’s effects are not predetermined: whether it produces good or harm depends on our choices.

- In some instances, AI will democratise access and opportunities, and in other cases, it will exacerbate existing societal bias.

- The underlying norms and values that inform the development of AI systems are often Western, irrespective of whether Africans or other non-dominantly situated people create the technology. Some of these technologies can be oppressive when they are causally intertwined with an oppressive system, shaping a person’s psychological processes and social structures.

Lesson 1: Everything is connected

There are persistent calls for the ethical development and deployment of AI systems. These calls often ignore context and treat AI as if it operated in a vacuum. Yet there is compelling evidence that people rarely act on the basis of ethical principles. In 2019, Funda Ustek-Spilda et al. showed that Internet of Things developers, operating within a social milieu that prizes innovation, market share and corporate reputation, engage with ethical or moral concerns from one of three “action positions”: a disengaged position (ethics is bad for business), a pragmatist position (doing ethics can appease customers and drive profit), or an idealist position (ethical concerns must be taken seriously and not subsumed by business interests).

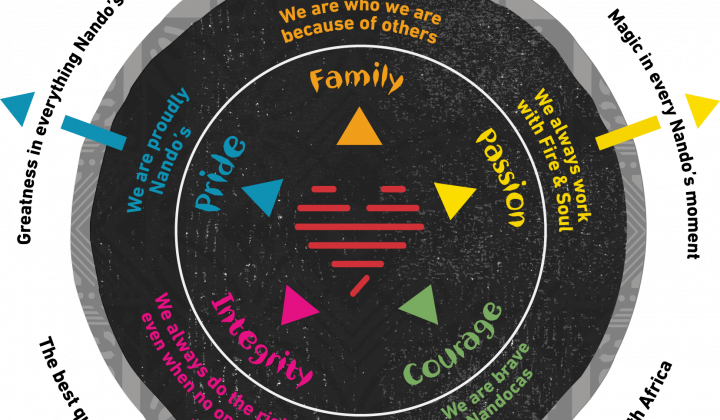

The three action positions show that our choices are largely shaped by our context: context guides and constrains what is possible. An organisation’s rules and practices, whether explicit or implicit, can limit or enable the ethical development and deployment of AI systems.

Whatever ethical codes business leaders subscribe to, if a company’s prevailing core values centre profit as the fundamental goal, without a deep commitment to respect for the individual and to its responsibilities towards the communities in which it operates, developing and deploying AI in a just way is impossible.

A commitment to systems thinking is a prerequisite for AI to be a force for good. Systems thinking is an orientation that considers and attends to the interactions and relationships within a complex system; it attends to the whole context. For example, narrow, linear attention to intelligent automation, or to using AI to complement workers' skills through labour and capital augmentation, can deliver cost efficiency and profitability, but it may also produce harms that extend beyond the boundaries of a given organisation. Leaders, more than ever, must live systems thinking rather than merely use it as a tool, staying alert to the intricate interdependencies and interrelationships within which AI systems operate.

A deep commitment to systems thinking would require leaders to make hard choices, forfeiting short-term institutional success for a long-term, sustainable future for all. For this to be possible, they must collaborate within and across sectors to align incentives. Trans-sector frameworks that centre justice for people and the planet are needed; otherwise, corporations and businesses have no incentive to do the right thing in a largely competitive landscape.

Lesson 2: Don't be fooled by the speed and scale that AI promises; complex problems require that we think and act slowly

AI comes with the allure of speed and scale. It promises, for example, to scale learning for children in rural Limpopo, and it performs some tasks faster and at a greater scale than humans can. This might tempt us to think that AI-driven technical solutions are the way to solve our world’s challenges, from mitigating the worst effects of climate change to addressing inequality, ending poverty, and strengthening our democracies.

AI is not a panacea for intractable social problems. It has its place in the overall equation, but we will fail drastically if we approach it as the sole solution. Greek mythology tells the story of Procrustes, who offered a bed to wandering guests but made sure they fit it “perfectly”: shorter guests were stretched, their bodies contorted to fit the bed, and taller guests had their legs cut off to fit his iron bed.

There is a growing danger that AI systems will be used as a Procrustean bed: offered as a silver bullet for any and all problems while ignoring the specificities of complex systems. In my experience of working in social systems, whether food, water, gender-based violence, education, health, inclusive insurance or strengthening democracy (often in conflict-affected areas), these issues require us to think and act slowly: to understand their systemic nature, to build the trust that enables diverse actors to move forward together, and then to act together.

The call to slow down might seem at odds with the need for speed as we work to avert the worst dangers of climate change and growing inequality in many parts of the world. Yet despite the urgent need for speed in responding to our climate crisis, fast and easy solutions will not deliver the needed change. The paradox of sustainable development is that we need to slow down in order to speed up.

While AI might offer speed in certain respects, we must be attentive to the following dangers:

- Technical solutions often stifle collective agency and narrow the responsibility field, reinforcing the hierarchy between providers of "solutions" and "beneficiaries". Often, the providers are not meaningfully connected to the problem and end up producing negative (un)intended consequences.

- Technical solutions promote one way of knowing and being. Addressing our pressing challenges requires giving a prominent place to historically marginalised knowledge, particularly because our current challenges were birthed and are sustained by the Western imaginary and episteme. Audre Lorde, the intersectional feminist and poet, was clear about the limitations of working from within the dominant playing field: “For the master's tools will never dismantle the master's house. They may allow us temporarily to beat him at his own game, but they will never enable us to bring about genuine change.” (Lorde, A. (1984). Sister Outsider: Essays and Speeches by Audre Lorde. Freedom, CA: The Crossing Press, p. 112)

- Technical solutions pursue quick fixes without interrogating and challenging the underlying systems and structures that have produced the problems in the first place.

Lesson 3: Create the future you want

It is very likely that within the next decade, your medical operation will be performed by a robot. It is even more likely that many people will lose their jobs to AI advances and that new jobs will emerge. Contemplating these fast-moving developments understandably stirs both apprehension and excitement.

Advances in AI are often framed as something that will happen to us. In reality, people develop and deploy these technologies. The people driving innovation in AI are not bad actors; they are creating the future they want. However, the power asymmetry between the big tech corporations driving these innovations and other actors seems to widen every day.

How do we move from a conversation in which we are portrayed as passive recipients to one in which we see ourselves as active citizens?

By building collective power with diverse actors, we can shape the future we want. One of my favourite quotes, often attributed to Peter Drucker, is that “the best way to predict the future is to create it”. It is common to feel overwhelmed by the uncertainty that the age of AI will bring. Algorithms will increasingly mediate social processes, business transactions, governmental decisions, and how we perceive, understand and interact with one another and with the environment. The invitation is not to resist this but to actively shape a future that centres justice and equity for all people and the planet.

With the recent explosion of AI systems, such as generative AI models like ChatGPT and Bard driven by sophisticated machine learning algorithms, we are only beginning to grasp the immense potential of AI. The anticipated potential of AI systems to help solve human and ecological problems will only be realised by recognising and attending to the interrelationships among the various parts of the AI ecosystem, by thinking and acting slowly, and by stepping into our power to create the future we want.

Akanimo Akpan is a senior consultant at Reos Partners, Johannesburg. He works in the area of complex adaptive problems in various social systems, designing and facilitating cross-sectoral multi-stakeholder engagements in justice transformation, water resilience, food security, education reforms, and energy transition. He recently obtained his PhD in Philosophy from the University of Johannesburg, with a thesis titled "Decolonising Algorithms: Towards the Making of Epistemically Just Algorithms." He is a member of the African Centre for Epistemology and Philosophy of Science.